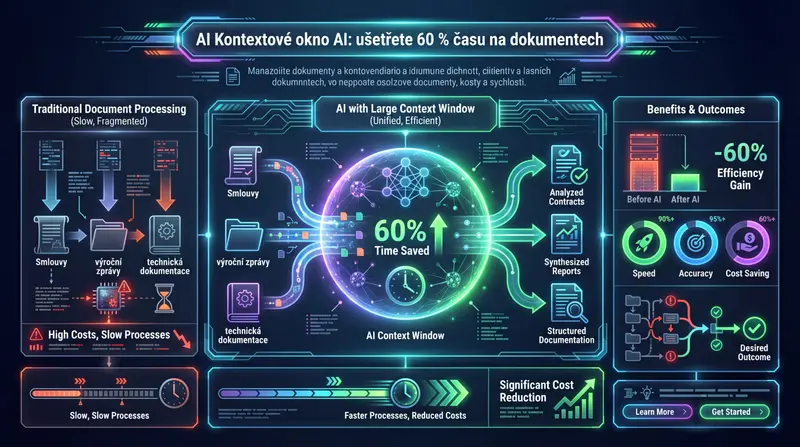

AI Context Window: Save 60% of Time on Documents

Contracts, annual reports, technical documentation — AI can handle them all at once. Learn how a larger context window reduces costs and speeds up your processes.

Vít Šafařík

AI & business productivity

Imagine giving an assistant a task: “Read this contract and tell me where the risks are.” The assistant takes the document, starts reading — and somewhere in the middle forgets what they read at the beginning. The conclusions they hand you are inaccurate. Possibly even dangerous.

That’s exactly what happens when AI hits the limit of its context window.

What Is a Context Window and Why Does Its Size Matter

A context window is the amount of text an AI “sees” at once, measured in tokens: small pieces of text that average roughly three-quarters of an English word. Everything that doesn’t fit into this window simply isn’t processed, or you have to mechanically chop documents into pieces and manually stitch the results back together.

A good analogy: a work desk versus an archive cabinet. AI only works with what’s currently on the desk. If the desk is small, you constantly have to shuffle documents back and forth — you waste time, make mistakes, and simply miss part of the information.

A larger context window = a larger desk. An entire contract, an entire annual report, an entire technical manual — all at once, without cutting.
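To make the “chop and stitch” workaround concrete, here is a minimal Python sketch of how a document gets split for a small window. The function name `split_into_chunks`, the 4-characters-per-token estimate, and the 200-token overlap are illustrative assumptions, not the behavior of any particular product.

```python
def split_into_chunks(text: str, max_tokens: int, overlap_tokens: int = 200) -> list[str]:
    """Split text into overlapping pieces that each fit a small context window.

    Tokens are approximated as 4 characters each (a rough rule of thumb for
    English); a real pipeline would count them with the model's tokenizer.
    """
    chars_per_token = 4  # assumption, not an exact tokenizer
    max_chars = max_tokens * chars_per_token
    overlap_chars = overlap_tokens * chars_per_token
    if overlap_chars >= max_chars:
        raise ValueError("overlap must be smaller than the chunk size")

    chunks = []
    start = 0
    while start < len(text):
        end = min(start + max_chars, len(text))
        chunks.append(text[start:end])
        if end == len(text):
            break
        # Step back by the overlap so text cut at a boundary appears in both chunks.
        start = end - overlap_chars
    return chunks
```

Each chunk then goes out as a separate query, and someone has to merge the answers. The overlap reduces, but does not remove, the chance that a clause split across a boundary is misread. For documents that fit into a large window, this step disappears.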

From 4,000 Tokens to a Million: What Happened in Three Years

When ChatGPT launched in November 2022, its context window was 4,000 tokens, roughly 10 pages of text. Today, in early 2026, the leading models work with a million tokens, 250 times more, and Meta’s Llama 4 Scout handles 10 million.

Key milestones:

- May 2023 — Anthropic was the first to break the 100,000-token barrier with Claude. Suddenly you could process an entire book.

- November 2023 — OpenAI’s GPT-4 Turbo reached 128,000 tokens. Claude 2.1 pushed the bar to 200,000.

- February 2024 — Google introduced Gemini 1.5 Pro with one million tokens. Roughly 2,500 pages of text in a single query. A breakthrough moment.

- April 2025 — OpenAI released GPT-4.1 with a million-token context. For the first time, all three major platforms (Google, Anthropic, OpenAI) had 1M-window models.

- April 2025 — Meta released open-source Llama 4 Scout with 10 million tokens. The boundaries shifted by another order of magnitude.

- March 2026 — Claude Opus 4.6 and Sonnet 4.6 offer 1M tokens at standard pricing, with no long-context surcharge.
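The page figures in this timeline are rules of thumb. The short Python sketch below shows the arithmetic behind them. It assumes about 0.75 words per token and 300 words per page, which is the ratio behind the 2,500-pages-per-million figure. Denser or sparser page layouts shift the results.

```python
WORDS_PER_TOKEN = 0.75  # rough average for English text (assumption)
WORDS_PER_PAGE = 300    # typical page of business prose (assumption)

def tokens_to_pages(tokens: int) -> int:
    """Estimate how many pages of text fit into a given number of tokens."""
    return round(tokens * WORDS_PER_TOKEN / WORDS_PER_PAGE)

# Context windows from the milestones above.
milestones = {
    "ChatGPT (Nov 2022)": 4_000,
    "Claude (May 2023)": 100_000,
    "GPT-4 Turbo (Nov 2023)": 128_000,
    "Claude 2.1 (Nov 2023)": 200_000,
    "Gemini 1.5 Pro (Feb 2024)": 1_000_000,
    "Llama 4 Scout": 10_000_000,
}

baseline = milestones["ChatGPT (Nov 2022)"]
for name, window in milestones.items():
    growth = window / baseline  # how many times larger than the 2022 window
    print(f"{name:<26} {window:>12,} tokens  ~{tokens_to_pages(window):>6,} pages  {growth:>6,.0f}x")
```

Under these assumptions, 4,000 tokens come out at about 10 pages and a million tokens at about 2,500. Llama 4 Scout’s 10 million tokens are 2,500 times the original ChatGPT window.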

Where We Are Today: Major Models Overview (March 2026)

| Model | Context Window | Note |
|---|---|---|
| Claude Opus 4.6 / Sonnet 4.6 | 1,000,000 tokens | No long-context surcharge |
| GPT-4.1 (OpenAI) | 1,000,000 tokens | All variants (standard, mini, nano) |
| Gemini 2.5 Pro (Google) | 1,000,000 tokens | Google reports 99.7% accuracy at full range |
| Llama 4 Maverick (Meta) | 1,000,000 tokens | Open-source, self-hostable |
| Llama 4 Scout (Meta) | 10,000,000 tokens | Open-source, currently the largest available context |
| DeepSeek V4 | 1,000,000 tokens | Chinese model with one trillion parameters |
| Grok 3 (xAI) | 1,000,000 tokens | |
| GPT-4o (OpenAI) | 128,000 tokens | Previous generation, still widely used |
| Claude Haiku 4.5 | 200,000 tokens | Fast and affordable model for shorter documents |

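For comparison: the average business contract is 20–40 pages, and a large company’s annual report is 150–300 pages. At roughly 2,500 pages per million tokens, even a 300-page report fills only about an eighth of a million-token window. That leaves enough room to process the complete technical documentation of a software product at once.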
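You can check how much of a window a document would occupy with a quick calculation. The minimal Python sketch below assumes about 400 tokens per page, the same ratio as the 2,500-pages-per-million figure. The three models come from the overview table, and the function name `window_usage` is illustrative.

```python
TOKENS_PER_PAGE = 400  # assumption: ~300 words per page at ~0.75 words per token

# Context windows taken from the overview table above.
WINDOWS = {
    "GPT-4o": 128_000,
    "Claude Haiku 4.5": 200_000,
    "GPT-4.1": 1_000_000,
}

def window_usage(pages: int) -> dict[str, float]:
    """Return the share of each context window a document of `pages` pages would fill."""
    tokens = pages * TOKENS_PER_PAGE
    return {model: tokens / window for model, window in WINDOWS.items()}

for label, pages in [("40-page contract", 40), ("300-page annual report", 300)]:
    usage = window_usage(pages)
    summary = ", ".join(f"{model}: {share:.1%}" for model, share in usage.items())
    print(f"{label}: {summary}")
```

A 40-page contract takes 12.5% of GPT-4o’s window. A 300-page report technically fits into GPT-4o’s 128,000 tokens, but it fills roughly 94% of the window. That is well past the 60–70% range where, as the next section explains, accuracy tends to drop.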

What Matters More Than the Declared Number

An important detail: the declared limit doesn’t mean AI works with the entire text equally reliably. Most models start losing accuracy at 60–70% of their maximum. Information in the middle of a very long document can be overlooked.

There are exceptions. Google documents 99.7% accuracy for Gemini 2.5 Pro even at a full million tokens, and Claude Opus 4.6 shows consistent quality across its entire range in independent tests.

In practice this means: don’t use a million-token context for everything. Use it where it makes sense — for complex documents where key information is scattered across hundreds of pages.
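One way to put this rule into practice is a quick check: treat only part of the window as reliable, then count how many calls a document would need. The Python sketch below does that. Its 0.6 default mirrors the lower end of the 60–70% range mentioned above. The function name and the threshold are illustrative assumptions, and real behavior varies by model and task.

```python
import math

def calls_needed(doc_tokens: int, window: int, usable_fraction: float = 0.6) -> int:
    """Count the calls a document needs if only part of the window is trusted.

    usable_fraction models the rule of thumb that accuracy starts to slip at
    60-70% of the declared limit; 0.6 is the conservative end of that range.
    """
    if not 0 < usable_fraction <= 1:
        raise ValueError("usable_fraction must be in (0, 1]")
    reliable_budget = int(window * usable_fraction)
    return math.ceil(doc_tokens / reliable_budget)


# A ~120,000-token annual report (about 300 pages):
print(calls_needed(120_000, 128_000))    # 128K window: 2 calls
print(calls_needed(120_000, 1_000_000))  # 1M window: a single call
```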

Real-World Cost Savings: Concrete Examples

Legal Documents and Automated Contract Review

Legal departments typically submit individual sections of a contract to AI separately and manually assemble the results. With a million-token context, the entire contract — including appendices and cross-references — goes into a single query. AI catches discrepancies between clauses on page 3 and page 47 that sequential processing would miss.

Financial Analysis and Due Diligence

Clinical study documentation, annual reports, audit materials: hundreds of pages of data. Previously it was necessary to chop the documents up, process them in chunks, and manually aggregate the results. Today the entire material goes into a single call. Conclusions are more coherent, and no context is lost at the boundaries between chunks.

Technical Manuals and Code Review

Software companies use large context windows to review entire repositories at once. Instead of checking file by file, AI sees how parts of the code interact with each other. It can then catch bugs in those interactions that a piecemeal review would miss.
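To review a repository in one call, you mostly need to pack the files into a single prompt with clear labels. The minimal Python sketch below assumes a local checkout and plain-text source files. The names `build_repo_prompt` and `estimate_tokens`, the `=== FILE: ... ===` header format, the suffix filter, and the 4-characters-per-token estimate are all hypothetical choices. No model API requires them.

```python
from pathlib import Path

def build_repo_prompt(root: str, suffixes: tuple[str, ...] = (".py", ".md")) -> str:
    """Concatenate source files into one prompt, each prefixed with its path.

    Path headers let the model refer to specific files when it reports how
    parts of the code interact.
    """
    base = Path(root)
    parts = []
    for path in sorted(base.rglob("*")):
        if path.is_file() and path.suffix in suffixes:
            relative = path.relative_to(base).as_posix()
            body = path.read_text(encoding="utf-8", errors="replace")
            parts.append(f"=== FILE: {relative} ===\n{body}")
    return "\n\n".join(parts)

def estimate_tokens(text: str) -> int:
    """Rough token estimate, assuming about 4 characters per token."""
    return len(text) // 4
```

Compare `estimate_tokens(prompt)` with the model’s window to see whether the repository fits into one call or has to be reviewed in parts.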

Where Your Company Is Losing Money

Ask yourself: where in your company does AI process documents longer than 50 pages? Where do you have to manually combine outputs from multiple queries? Where do AI hallucinations cause you to distrust the results?

Those are exactly the places where a larger context window delivers immediate savings.

The most common candidates:

- Legal department — contracts, court filings, regulatory documents

- Finance — annual reports, due diligence, audit reports

- IT and development — technical documentation, code review, security audits

- HR and compliance — internal policies, employment contracts, ISO documentation

An overview of specific AI document processing services can be found on a dedicated page.

What It Costs

Larger context = higher cost per call. Approximate prices per one million input tokens (March 2026; output tokens are billed separately, at higher rates):

- Gemini 2.5 Flash: $0.30 — cheapest option for bulk processing

- GPT-4.1: ~$2 — good price-to-performance ratio

- Claude Sonnet 4.6: $3 — no long-context surcharge

- Claude Opus 4.6: $5 — highest quality

Important: Claude Opus 4.6 and Sonnet 4.6 charge the same per-token rate across their full 1M window, and GPT-4.1 also has no long-context surcharge. Other models, such as Gemini 2.5 Pro, switch to a higher rate once a prompt exceeds a size threshold.
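To turn these prices into a cost per document, multiply the token count by the per-million rate. The minimal Python sketch below uses the input prices listed above. It assumes a 300-page annual report of about 120,000 tokens, and it ignores output tokens and any surcharges. The function name `input_cost` is illustrative.

```python
# Approximate input prices in USD per one million tokens, from the list above (March 2026).
PRICE_PER_MILLION = {
    "Gemini 2.5 Flash": 0.30,
    "GPT-4.1": 2.00,
    "Claude Sonnet 4.6": 3.00,
    "Claude Opus 4.6": 5.00,
}

def input_cost(tokens: int, model: str) -> float:
    """Input cost of one call in USD; output tokens and surcharges are ignored."""
    return tokens / 1_000_000 * PRICE_PER_MILLION[model]

report_tokens = 120_000  # assumption: ~300-page annual report at ~400 tokens per page
for model in PRICE_PER_MILLION:
    print(f"{model:<18} ${input_cost(report_tokens, model):.2f} per call")
```

At these rates, the input for one such report costs under a dollar on any of the four models. The choice of model starts to matter when you multiply the cost by the number of documents processed each month.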

The right strategy is not “always use the largest window.” It’s “use the right model for the right task,” combined with knowing when a large context actually saves time and money and when you are simply overpaying.

How to Start: A Process Audit as the First Step

The most valuable first step isn’t choosing a model. It’s an audit: where exactly in your processes does AI run into document limits? Where are you manually assembling results from multiple queries? Where do you distrust the results because AI can’t see the full picture?

Only with this map does it make sense to design solutions.

If you don’t know where to start, or want a concrete analysis for your company, get in touch. Or first take a look at what an AI audit looks like in practice — no commitment, no technical jargon. More process automation tips can also be found on the blog.

A larger desk doesn’t by itself mean better work. But when you’re processing page after page of documents, the difference between 50 and 2,500 pages at once is exactly the one that matters.
